Core Web Vitals, Real Users, and What “Good Performance” Actually Means

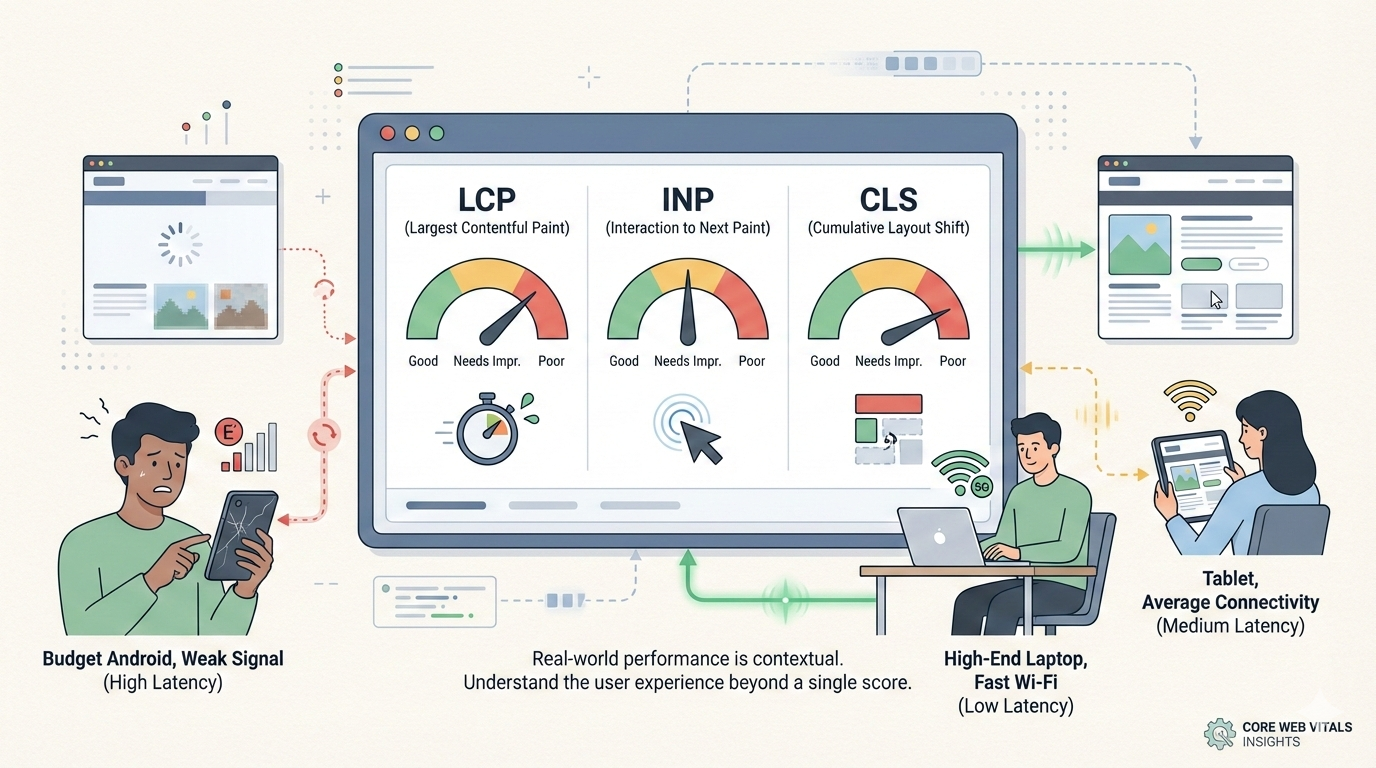

Core Web Vitals were meant to simplify web performance. Instead, they exposed a deeper truth:

Performance is contextual, uneven, and impossible to judge from a single score.

If you’ve ever seen this contradiction:

- Lighthouse score: 95–100

- Google Search Console: Core Web Vitals assessment failed

Nothing is broken. You’re just measuring two different realities.

This article explains what Core Web Vitals actually measure, why geography and network quality dominate the numbers, why lab tools routinely mislead teams, how the web is actually performing today, and what “optimal” speed really means in the market — not in theory.

What Core Web Vitals Really Measure

Core Web Vitals aim to capture how a site feels to real users, not how it behaves in a perfect test environment.

They focus on three signals:

- Largest Contentful Paint (LCP) — when the largest image or text block in the viewport renders, a proxy for when the page looks ready (≤ 2.5s is good)

- Interaction to Next Paint (INP) — how responsive the page feels during interaction (≤ 200ms is good)

- Cumulative Layout Shift (CLS) — whether the layout behaves predictably (≤ 0.1 is good)

To pass, each of the three metrics must be Good for at least 75% of real page visits (the 75th percentile), measured over a rolling 28-day window.

That single rule explains most confusion.

You are not judged by your fastest users.

You are judged by your slowest meaningful users.
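
To see why the 75th percentile, not the typical visit, decides the outcome, here is a minimal Python sketch. It assumes the nearest-rank percentile method and uses made-up LCP samples in milliseconds; Google's Chrome UX Report aggregates histograms, so its exact percentile arithmetic may differ. The `p75` helper is a hypothetical name.

```python
import math
import statistics

def p75(samples: list[int]) -> int:
    """75th percentile by the nearest-rank method (an assumption: CrUX
    aggregates histograms, so its exact arithmetic may differ)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank of the p75 sample
    return ordered[rank - 1]

# Made-up LCP values in milliseconds for eight visits to one page.
lcp_ms = [1200, 1400, 1500, 1600, 1800, 2900, 3400, 4100]

print("median:", statistics.median(lcp_ms), "ms")  # the "typical" visit
print("p75:   ", p75(lcp_ms), "ms")                # what the assessment uses
print("passes LCP:", p75(lcp_ms) <= 2500)
```

The median visit renders in 1.7s, but three of the eight visits are slower than 2.5s, more than the quarter the rule tolerates, so the p75 lands at 2.9s and the page fails LCP.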

Google’s Global Thresholds (The Ideal)

Google’s thresholds are universal and apply at the 75th percentile of real user visits:

- LCP ≤ 2.5s

- INP ≤ 200ms

- CLS ≤ 0.1

A site only “passes Core Web Vitals” if each of the three is Good for at least 75% of page views.

These are ideals. Ideals are not the same as market reality.
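
The thresholds and the all-three rule fit in a few lines. This Python sketch also includes Google's "Poor" boundaries (LCP above 4s, INP above 500ms, CLS above 0.25), which sit beyond the Good values listed above; the function names `rate` and `passes_cwv` are hypothetical.

```python
# Google's published boundaries per metric: (Good if <=, Poor if >).
# LCP and INP are in milliseconds; CLS is a unitless score.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}

def rate(metric: str, p75_value: float) -> str:
    """Classify one metric's 75th-percentile value."""
    good, poor = THRESHOLDS[metric]
    if p75_value <= good:
        return "good"
    if p75_value <= poor:
        return "needs improvement"
    return "poor"

def passes_cwv(p75s: dict[str, float]) -> bool:
    """Pass only if every metric is Good at the 75th percentile."""
    return all(rate(metric, p75s[metric]) == "good" for metric in THRESHOLDS)

site = {"LCP": 2300, "INP": 240, "CLS": 0.05}
print({metric: rate(metric, value) for metric, value in site.items()})
print("passes:", passes_cwv(site))  # False: INP is not Good
```

One "needs improvement" metric is enough to fail, even when the other two are comfortably Good.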

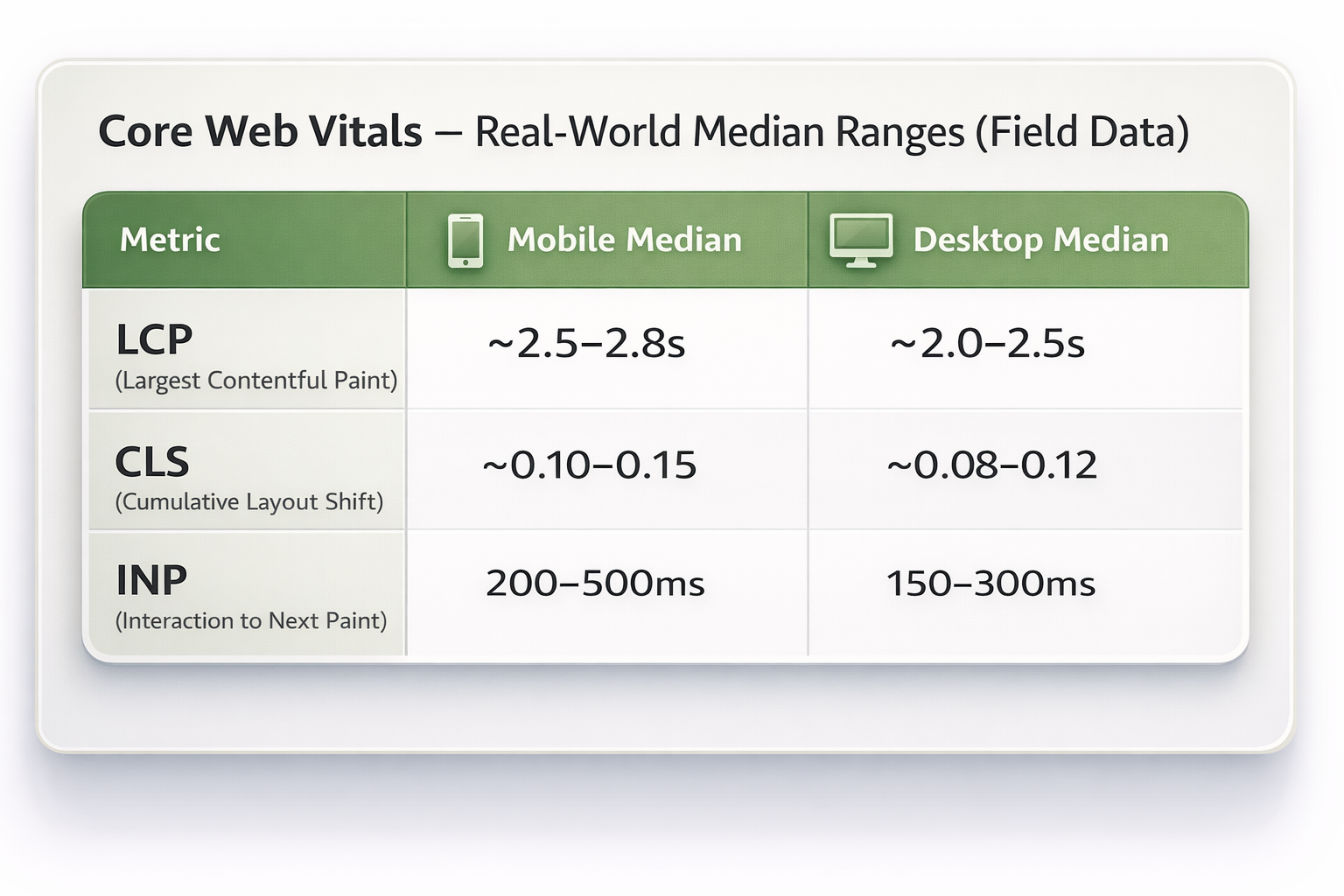

How the Web Is Actually Performing (Reality Check)

Large-scale studies across hundreds of thousands of sites in 2024–2025 show a consistent picture:

- Only ~40–50% of websites pass all Core Web Vitals on mobile

- Desktop performs slightly better at ~46–54%

- INP is now the hardest metric for JavaScript-heavy apps, especially on mobile

If your site passes all three vitals on mobile, you’re already ahead of roughly half the web.

Geography and Network Quality Matter More Than Teams Expect

Performance is not evenly distributed across the internet.

A user with:

- a budget Android device

- a congested mobile data connection

- a thermally throttled CPU

- apps running in the background

will experience your site very differently from someone on a high-end laptop with fiber.

Chrome UX Report (CrUX) data shows massive variation in Core Web Vitals by country, network quality, and device type. Google does not normalize for this.

From Google’s perspective:

If users in your market experience slowness, that is the experience.

This is why globally distributed, mobile-first audiences often struggle to pass Core Web Vitals despite solid engineering.
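
A small simulation shows how audience mix alone can flip the result. This Python sketch uses two hypothetical cohorts of LCP samples for the same page and blends them in different traffic ratios; the cohort labels, sample values, and helper names are illustrative assumptions, and the percentile is nearest-rank.

```python
import math

def p75(samples: list[int]) -> int:
    ordered = sorted(samples)  # nearest-rank 75th percentile
    return ordered[math.ceil(0.75 * len(ordered)) - 1]

# Hypothetical LCP samples (ms) for the same page under two conditions.
fast_cohort = [1100, 1300, 1500, 1700]  # e.g. desktop on fiber
slow_cohort = [2800, 3300, 3900, 4600]  # e.g. budget Android on congested data

def blended_p75(fast_weight: int, slow_weight: int) -> int:
    """p75 when the cohorts appear in a fast_weight:slow_weight traffic ratio."""
    return p75(fast_cohort * fast_weight + slow_cohort * slow_weight)

for fast_weight, slow_weight in [(9, 1), (3, 1), (2, 1), (1, 1)]:
    share = slow_weight / (fast_weight + slow_weight)
    print(f"slow-cohort share {share:.0%}: p75 LCP = {blended_p75(fast_weight, slow_weight)} ms")
```

With identical code, the page passes LCP while the slow cohort is at most a quarter of traffic (p75 of 1.7s) and fails once it exceeds that (2.8s at a one-third share). The cliff is abrupt here because every slow-cohort sample is above 2.5s.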

Lab Scores vs Field Data: Why Tools Disagree

Most teams rely on lab tools such as Lighthouse, GTmetrix, and the lab-data section of PageSpeed Insights.

These tools are synthetic:

- fixed device profiles

- simulated networks

- no real user behavior

They are excellent for debugging. They are terrible as truth oracles.

Google rankings use field data collected from real users over time. That’s why a page can score 100 in Lighthouse and still fail Core Web Vitals.

Nothing is broken. The tools are answering different questions.
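
One way to see the different questions: treat a lab run as a single sample and ask where it lands in the field distribution. This Python sketch uses made-up numbers for one simulated run and ten real visits; `percentile_rank` and `p75` are hypothetical helpers, not part of any tool's API.

```python
import math
from bisect import bisect_right

def percentile_rank(value: float, samples: list[float]) -> float:
    """Fraction of samples at or below `value`."""
    ordered = sorted(samples)
    return bisect_right(ordered, value) / len(ordered)

def p75(samples: list[float]) -> float:
    ordered = sorted(samples)  # nearest-rank 75th percentile
    return ordered[math.ceil(0.75 * len(ordered)) - 1]

lab_lcp_ms = 1800  # hypothetical: one simulated lab run
field_lcp_ms = [1200, 1500, 1700, 1900, 2100, 2400, 2700, 3100, 3600, 4800]  # hypothetical field visits

print(f"lab run sits at the {percentile_rank(lab_lcp_ms, field_lcp_ms):.0%} point of field visits")
print(f"field p75: {p75(field_lcp_ms)} ms")
```

In this made-up case the lab run describes a visit faster than 70% of real ones. It is not wrong; it answers "how fast under these fixed conditions?" while the field p75 answers "how fast for the slower quarter of real visits?"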

What “Optimal” Speed Means in the Market

Optimal does not mean perfect scores.

It means better than your market and competitors.

Most of the web still hasn’t hit Google’s ideal thresholds, especially on mobile. Market leadership comes from outperforming your vertical, not chasing theoretical perfection.

Practical Benchmarks by Category

Retail / E-commerce

Market reality: ~50–60% of retail sites pass Core Web Vitals on mobile.

Competitive targets:

- Mobile LCP: ≤ 2.2–2.5s on home, product listing (PLP), and product detail (PDP) pages

- INP: ≤ 200ms on add-to-cart and checkout (≤ 250ms still competitive)

- CLS: ≤ 0.1 on product and checkout pages

Content / Publishing / Media

Market reality: Ads and embeds push LCP and CLS up.

Competitive targets:

- LCP: ≤ 2.5–2.8s on article pages

- INP: ≤ 200–250ms for navigation and interactions

- CLS: ≤ 0.1 by reserving ad space early

SaaS / Web Apps / Dashboards

Market reality: LCP is often acceptable. INP is the main failure point.

Competitive targets:

- LCP: ≤ 2.5s for first meaningful screen

- INP: ≤ 200ms on core actions (≤ 250–300ms realistic for complex UIs)

- CLS: ≤ 0.1 is usually achievable
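
The category targets above can be encoded as data and checked against your own field p75 values. In this Python sketch, each range from the text becomes a (stretch target, competitive ceiling) pair; the category keys, the pairing scheme, and the `grade` function are illustrative assumptions.

```python
# Competitive targets from the benchmarks above, per metric as
# (stretch target, competitive ceiling). LCP/INP in ms, CLS unitless.
# Category keys are illustrative; where the text gives one value, both bounds match.
TARGETS = {
    "retail":     {"LCP": (2200, 2500), "INP": (200, 250), "CLS": (0.1, 0.1)},
    "publishing": {"LCP": (2500, 2800), "INP": (200, 250), "CLS": (0.1, 0.1)},
    "saas":       {"LCP": (2500, 2500), "INP": (200, 300), "CLS": (0.1, 0.1)},
}

def grade(category: str, p75s: dict[str, float]) -> dict[str, str]:
    """Compare field p75 values against one category's targets."""
    result = {}
    for metric, (stretch, ceiling) in TARGETS[category].items():
        value = p75s[metric]
        if value <= stretch:
            result[metric] = "on target"
        elif value <= ceiling:
            result[metric] = "competitive"
        else:
            result[metric] = "behind"
    return result

print(grade("saas", {"LCP": 2100, "INP": 280, "CLS": 0.04}))
```

Note that "competitive" publishing LCP (up to 2.8s) sits above Google's 2.5s Good threshold, so a page can lead its category and still fail the assessment.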

How Much Do Core Web Vitals Actually Affect SEO?

Less than most people think.

Google has been clear:

- Core Web Vitals are a tie-breaker, not a primary ranking factor

- Content relevance and intent dominate

- Great content with “good enough” performance usually wins

You can’t performance-optimize your way out of weak content.

How to Measure Performance the Right Way

Strong teams use layers, not a single score:

- Field data first — Search Console and PageSpeed Insights (PSI) field data show where users struggle

- Real user monitoring (RUM) next — segment by geography, device, network, funnel, and revenue impact

- Lab tools last — use Lighthouse and DevTools to debug causes

Field tells you what hurts. Lab tells you why.
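
The RUM layer boils down to grouping beacons by a segment and computing p75 per group. This Python sketch assumes a hypothetical `Beacon` record carrying INP plus a few tags; in practice the values would be collected in the browser, for example with Google's open-source `web-vitals` JavaScript library, and the data below is made up.

```python
import math
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Beacon:
    """One hypothetical RUM record: a visit's INP plus segment tags."""
    inp_ms: int
    country: str
    device: str
    page_type: str

def p75(values: list[int]) -> int:
    ordered = sorted(values)  # nearest-rank 75th percentile
    return ordered[math.ceil(0.75 * len(ordered)) - 1]

def failing_segments(beacons, dimension, threshold_ms=200, min_samples=3):
    """Group by one tag, keep segments whose p75 INP exceeds the threshold, worst first."""
    groups = defaultdict(list)
    for beacon in beacons:
        groups[getattr(beacon, dimension)].append(beacon.inp_ms)
    rows = [
        (segment, p75(values), len(values))
        for segment, values in groups.items()
        if len(values) >= min_samples  # skip segments too small to trust
    ]
    return sorted((r for r in rows if r[1] > threshold_ms), key=lambda r: r[1], reverse=True)

beacons = [
    Beacon(120, "US", "desktop", "checkout"),
    Beacon(150, "US", "mobile", "checkout"),
    Beacon(180, "US", "mobile", "product"),
    Beacon(140, "US", "desktop", "product"),
    Beacon(260, "IN", "mobile", "checkout"),
    Beacon(340, "IN", "mobile", "product"),
    Beacon(410, "IN", "mobile", "checkout"),
    Beacon(190, "IN", "desktop", "product"),
]

for dimension in ("country", "device", "page_type"):
    print(dimension, failing_segments(beacons, dimension))
```

Slicing the same beacons three ways points at different owners: one market, one device class, and one high-intent page type. Weighting segments by revenue would be a natural next step.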

What a “Good Website” Actually Means in 2025–2026

A good website today:

- accepts geography and hardware limits

- optimizes hardest where business impact is highest

- uses Core Web Vitals as guardrails, not religion

- measures real experience, not synthetic perfection

- prioritizes humans over dashboards

Where Total Website Speed Still Fits In

Total speed still matters. It’s just no longer one magic number.

As total load time increases, Google's 2017 mobile benchmark research found that bounce probability:

- rises 32% as load time goes from 1s to 3s

- rises 90% at 5s and 123% at 10s

Even if Core Web Vitals pass, slow-feeling experiences still lose users.

Core Web Vitals measure specific UX moments. They do not fully capture:

- Time to First Byte (TTFB)

- full content readiness

- long tasks and background execution

Improving backend speed, delivery, and JavaScript discipline usually improves both total speed and Core Web Vitals.
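
The link between TTFB and LCP is direct: web.dev's LCP guidance splits LCP into four consecutive subparts (time to first byte, resource load delay, resource load duration, element render delay), so backend latency lands in LCP one-for-one. This Python sketch uses made-up timestamps and assumes an image is the LCP element; in a browser the values would come from the Navigation Timing, Resource Timing, and Largest Contentful Paint APIs. `LcpTimings` and `lcp_subparts` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class LcpTimings:
    """Hypothetical timestamps (ms after navigation start), image LCP element."""
    ttfb: int                 # first byte of the HTML response arrives
    image_request_start: int  # browser starts requesting the LCP image
    image_response_end: int   # LCP image finishes downloading
    lcp: int                  # LCP element is painted

def lcp_subparts(t: LcpTimings) -> dict[str, int]:
    """Split LCP into four consecutive phases that sum to the LCP time."""
    return {
        "time_to_first_byte": t.ttfb,
        "resource_load_delay": t.image_request_start - t.ttfb,
        "resource_load_duration": t.image_response_end - t.image_request_start,
        "element_render_delay": t.lcp - t.image_response_end,
    }

visit = LcpTimings(ttfb=900, image_request_start=1400, image_response_end=2300, lcp=2700)
for name, ms in lcp_subparts(visit).items():
    print(f"{name:>24}: {ms:5d} ms ({ms / visit.lcp:.0%})")
```

Here TTFB alone is a third of the 2.7s LCP, so shaving server time improves both total load and the Core Web Vital.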

“But My Site Loads in ~2 Seconds”

This is the common confusion.

Modern sites often:

- render meaningful content fast

- load analytics and ads asynchronously

- feel instant on repeat visits

Core Web Vitals judge:

- cold starts

- slower devices

- weaker networks

- 75th percentile behavior

So a site can feel fast and still fail CWV.

Nothing is broken. You’re seeing the slower quarter of real-world visits, not your best case.

The Right Speed Goal

For SEO:

- page experience supports rankings; it doesn’t replace relevance

For product and business:

- aim for “fast enough that users barely wait”

- sub-3s end-to-end on mobile for key pages

- high-intent paths should be faster

The right model is not: “Pass Core Web Vitals and stop.”

It’s: Optimize end-to-end latency for real users, using Core Web Vitals as guardrails — not the finish line.

Closing Thought

Core Web Vitals are feedback, not a verdict.

Total speed still matters. Interaction quality still matters. Content relevance still matters.

The real measure of success is simple:

Can real humans, on real networks, do what they came to do smoothly and reliably?

Everything else is instrumentation.