Systems Architecture for Brand Growth: Escaping the CAC Trap in the Agentic Era

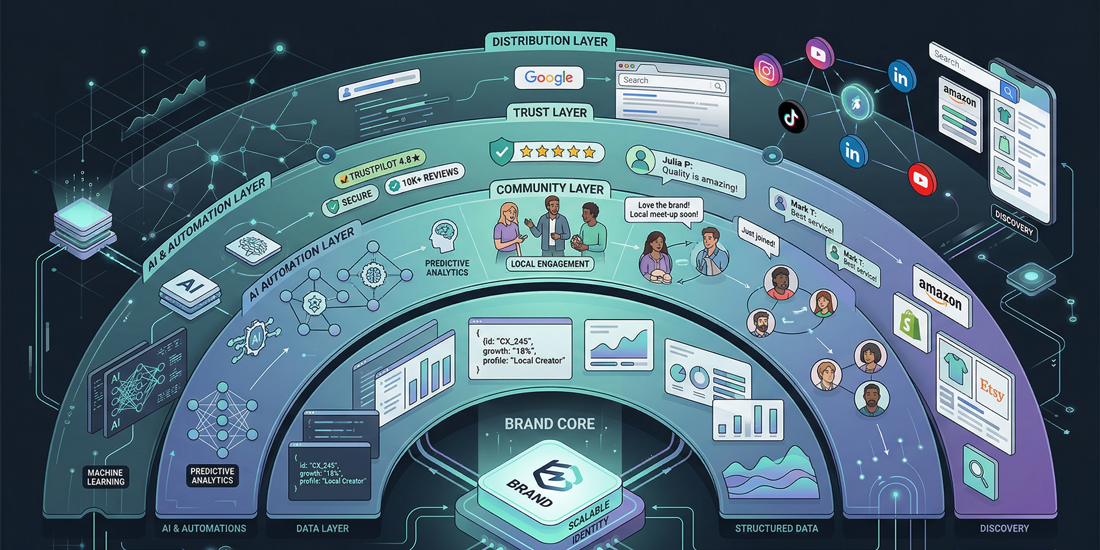

Brand growth now depends on machine readability, local trust, community retention, and operational elasticity.

Brand growth systems are replacing channel tactics because the old acquisition stack is structurally failing. Paid reach is more expensive, organic discovery is being absorbed into AI answer layers, and brands that still behave like a sequence of campaigns are discovering that better ad copy does not fix broken unit economics.

The failure is architectural. Customer acquisition costs expanded across sectors through the last decade, CPC pressure accelerated again in 2024, and generative search interfaces now compress the click path before a user even reaches your site. A modern brand has to behave like a closed-loop system: machine-readable at the data layer, trusted at the local layer, sticky at the community layer, and elastic at the operations layer.

That shift changes the build order. You do not start with campaigns and then add systems later. You engineer the data plane, trust plane, retention loop, and execution layer first, then let acquisition sit on top of an architecture that can actually absorb growth.

Brand Growth Systems Start With Broken Unit Economics

The baseline economics already explain why the legacy playbook is under strain. A healthy growth model still needs an LTV:CAC ratio of at least 3:1. Once acquisition costs rise while retention, trust, and automation remain weak, the margin stack collapses fast.

| Industry Sector | Average CAC | Minimum LTV Required (3:1) |
|---|---|---|
| SaaS and Technology Providers | $702-$1,200 | $2,106-$3,600 |
| Financial Services and Fintech | $1,450-$4,056 | $4,350-$12,168 |
| E-commerce and Retail | $70-$78 | $210-$234 |
| Home Services | $300 | $900 |
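
The minimum LTV column is simply the CAC range multiplied by the 3:1 target. A minimal Python sketch of that arithmetic follows; the function names are illustrative, and the CAC figures are copied from the table rather than computed from any external dataset.

```python
# Minimum LTV implied by a target LTV:CAC ratio (3:1 in the text).
TARGET_RATIO = 3.0

# CAC ranges (low, high) in USD, copied from the table in this section.
CAC_BY_SECTOR = {
    "SaaS and Technology Providers": (702, 1200),
    "Financial Services and Fintech": (1450, 4056),
    "E-commerce and Retail": (70, 78),
    "Home Services": (300, 300),
}


def minimum_ltv(cac: float, ratio: float = TARGET_RATIO) -> float:
    """Smallest LTV that still satisfies LTV / CAC >= ratio."""
    return cac * ratio


def is_healthy(ltv: float, cac: float, ratio: float = TARGET_RATIO) -> bool:
    """True when the LTV:CAC ratio meets or exceeds the target."""
    return ltv / cac >= ratio


for sector, (low, high) in CAC_BY_SECTOR.items():
    print(f"{sector}: ${minimum_ltv(low):,.0f}-${minimum_ltv(high):,.0f}")
```

Running the same check against your own blended CAC and observed LTV is a quick way to see how far a rise in acquisition cost pushes you below the threshold.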

You cannot out-edit this with sharper headlines. The problem sits deeper in the system: discovery is getting more expensive, attribution is getting noisier, and the channels you rent do not compound in your favor.

CAC Inflation Is a Systems Warning

When CAC climbs 60-75 percent across a decade, that is not a temporary campaign problem. It signals that the market has shifted from cheap distribution to expensive competition for attention, while platforms continue to auction the same audience back to you at a premium.

Search No Longer Guarantees Traffic

AI overviews and synthesized answer layers now intercept a meaningful share of informational intent. When the answer engine resolves the question without a click, your content must be machine-usable before it can be human-convincing.

That is why the architectural response matters more than another round of channel optimization.

The Machine-Readable Data Plane for AEO

Answer Engine Optimization is not a copywriting trick. It is a data architecture problem. If an LLM, shopping agent, or answer engine cannot parse your products, locations, and trust signals as explicit entities, your brand becomes invisible at the exact layer where discovery is moving.

Headless Decoupling Makes the Catalog Legible

A monolithic storefront traps inventory, pricing logic, and fulfillment constraints inside presentation-first templates. Move to a composable setup where the commerce backend, content layer, and interface layer are decoupled, and your catalog starts behaving like an addressable data system instead of a page renderer.

For Shopify-led stacks, that usually means treating Shopify as the commerce core while exposing product, pricing, shipping, and availability logic through APIs that both frontend clients and agentic systems can consume cleanly.

Entity Definition Beats Marketing Ambiguity

Generative systems penalize vague adjectives because they cannot operationalize them. “Premium quality” does not help an answer engine choose your product. Exact dimensions, delivery windows, return constraints, locality, ingredient composition, warranty terms, and review signals do.

Use schema.org aggressively across the stack:

- Product for catalog objects and offer states

- LocalBusiness for geographic trust and operating context

- FAQPage for intent-bound answer extraction

- Review and AggregateRating for explicit credibility signals
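
As a rough illustration of what explicit entities look like in practice, the sketch below builds a schema.org Product document with a nested Offer and AggregateRating and serializes it as JSON-LD. The property names are standard schema.org vocabulary; the helper function, product name, SKU, and numbers are hypothetical placeholders.

```python
import json


def product_jsonld(name, sku, price, currency, in_stock, rating_value, review_count):
    """Build a schema.org Product entity as a plain dict (hypothetical helper)."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }


# Placeholder values for illustration only.
doc = product_jsonld("Example Trail Shoe", "SKU-0001", 89.0, "USD", True, 4.6, 212)
print(json.dumps(doc, indent=2))
```

The printed JSON would typically be embedded in the page inside a script tag of type application/ld+json, so that crawlers and agents can read the entity without parsing the visual template.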

Intent-First Content Should Open With the Answer

LLM extraction improves when the page resolves the question early, then supports the answer with structured detail. Open core pages with a 30-60 word factual answer, follow with hierarchical evidence, and avoid forcing the model to infer the main point from marketing prose.
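
The 30-60 word guideline is easy to lint in a content pipeline. The sketch below is one possible check, assuming the page body is available as plain text with paragraphs separated by blank lines; the function names are illustrative, and word counting here is a simple whitespace split.

```python
import re

MIN_WORDS, MAX_WORDS = 30, 60  # range taken from the guideline in the text


def opening_paragraph(page_text: str) -> str:
    """Return the first non-empty paragraph of a plain-text page body."""
    for block in re.split(r"\n\s*\n", page_text.strip()):
        if block.strip():
            return block.strip()
    return ""


def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def opens_with_answer(page_text: str) -> bool:
    """True when the first paragraph falls inside the 30-60 word range."""
    return MIN_WORDS <= word_count(opening_paragraph(page_text)) <= MAX_WORDS
```

A check like this only measures length. Whether the opening paragraph actually answers the query still needs editorial review.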

Hyperlocal Moats Outperform Platform Dependence

Centralized marketplaces scale distribution, but they also compress margins and own the customer graph. When one marketplace, ad network, or algorithm becomes the primary route to demand, your growth model inherits a single point of failure.

The stronger alternative is to dominate a specific local node while integrating with open networks that widen discovery without handing over the whole transaction layer.

ONDC Changes the Topology of Commerce

The Open Network for Digital Commerce breaks the marketplace model into interoperable roles. Discovery, order management, and fulfillment no longer have to live inside the same closed platform. That matters for independent brands because it lowers dependence on high-take-rate aggregators and increases protocol-level reach.

Local Trust Still Wins Near-Intent Discovery

Local queries remain one of the few categories where precision beats scale. Accurate business profiles, synchronized directory data, fulfillment boundaries, and service-level clarity create a dense trust node that AI summaries can resolve confidently.

For “near me” and urgent intent, a highly structured local presence frequently beats a national player with weaker local truth. This is not nostalgia for offline retail. It is a data quality advantage.

Geographic Density Creates a Defensible Graph

Hyperlocal trust compounds when product availability, delivery SLA, service radius, and proof signals all point to the same place. That consistency reduces hallucination surface for answer engines and reduces hesitation for buyers.
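
One way to test whether your local signals really point to the same place is to diff the same location record across every surface that publishes it. The sketch below assumes you can export each listing as a dictionary; the source names, field names, and sample values are hypothetical.

```python
from collections import defaultdict

# Fields compared across listings (hypothetical field names).
FIELDS = ("name", "phone", "postal_code", "service_radius_km")


def normalize(value) -> str:
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(str(value).lower().split())


def inconsistent_fields(listings: dict) -> dict:
    """Return {field: {value: [sources]}} for fields that disagree across sources."""
    conflicts = {}
    for field in FIELDS:
        seen = defaultdict(list)
        for source, record in listings.items():
            seen[normalize(record.get(field, ""))].append(source)
        if len(seen) > 1:
            conflicts[field] = dict(seen)
    return conflicts


# Placeholder listings for one store as published on three surfaces.
listings = {
    "website": {"name": "Acme Bakery", "phone": "555-0100",
                "postal_code": "560001", "service_radius_km": 5},
    "maps_profile": {"name": "acme  bakery", "phone": "555-0199",
                     "postal_code": "560001", "service_radius_km": 5},
    "directory": {"name": "Acme Bakery", "phone": "555-0100",
                  "postal_code": "560001", "service_radius_km": 5},
}
print(inconsistent_fields(listings))
```

Any field that shows up in the output is a place where an answer engine has to guess which version is true.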

Community-Led Growth Is Retention Infrastructure

Public social platforms still matter, but the highest-intent discovery often happens in private channels: WhatsApp groups, Discord servers, closed communities, direct messages, and trusted peer recommendations. That is why Community-Led Growth works best when you treat it as owned retention infrastructure rather than as a top-of-funnel social tactic.

Referrals Need to Be Engineered, Not Requested

Referred cohorts usually stay longer and spend more because trust is preloaded into the acquisition event. Build referral logic directly into your applications, checkout flows, loyalty systems, and post-purchase journeys so advocacy becomes a programmable behavior instead of a manual campaign.

If you already run custom Node.js or Rust services for shipping, pricing, or promotions, that is where referral and loyalty logic belongs. It should sit in the system layer, not in a spreadsheet-based side process.
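
To make "programmable behavior" concrete, here is a minimal sketch of referral logic living in service code. It is written in Python for brevity rather than the Node.js or Rust mentioned above. It derives a stable code per user with an HMAC so codes cannot be guessed from user IDs, then credits the referrer when a valid code is redeemed. The secret, the 5 percent reward rate, and the in-memory ledger are all assumptions for illustration.

```python
import hashlib
import hmac

SECRET = b"replace-with-a-real-secret"  # hypothetical; load from a secret store
CODE_LENGTH = 8


def referral_code(user_id: str) -> str:
    """Derive a stable, hard-to-guess referral code from a user ID."""
    digest = hmac.new(SECRET, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:CODE_LENGTH].upper()


def is_valid_code(user_id: str, code: str) -> bool:
    """Check a submitted code against the expected one in constant time."""
    expected = referral_code(user_id).encode("utf-8")
    return hmac.compare_digest(expected, code.upper().encode("utf-8"))


def credit_referrer(ledger: dict, referrer_id: str, code: str,
                    order_total: float, rate: float = 0.05) -> float:
    """Add a reward to the referrer's balance if the code is valid."""
    if not is_valid_code(referrer_id, code):
        return 0.0
    reward = round(order_total * rate, 2)
    ledger[referrer_id] = round(ledger.get(referrer_id, 0.0) + reward, 2)
    return reward
```

In a real system the ledger would be a database table and the credit would be triggered from the checkout or post-purchase flow, but the shape of the logic stays the same.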

Dark Social Requires Proxy Attribution

Dark social is hard to measure because the share event happens in channels that analytics platforms cannot see. You still need directional visibility, so instrument the edges:

- Dynamic UTM generation

- Referral codes tied to user cohorts

- Post-purchase surveys asking what triggered trust

- Customer support tagging for repeat mention patterns

This will never be perfectly observable. It does not need to be. It needs to be measurable enough to identify which communities produce your highest-LTV users.
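
Dynamic UTM generation, the first item in the list above, can be as simple as a helper that stamps every shareable link with the community and cohort it was issued to. The sketch below uses only the Python standard library. The utm_* names are the conventional UTM parameters, while the "dark_social" medium value and the ref parameter are assumptions you would adapt to your own analytics setup.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def tagged_share_url(base_url: str, community: str, cohort: str,
                     referral_code: str = "") -> str:
    """Return base_url with UTM parameters (and optional referral code) added."""
    parts = urlparse(base_url)
    query = dict(parse_qsl(parts.query))  # keep any existing parameters
    query.update({
        "utm_source": community,
        "utm_medium": "dark_social",  # assumed label for private-channel shares
        "utm_campaign": cohort,
    })
    if referral_code:
        query["ref"] = referral_code  # hypothetical parameter name
    return urlunparse(parts._replace(query=urlencode(query)))


# Placeholder URL and identifiers for illustration.
print(tagged_share_url("https://example.com/p/1?color=red",
                       "whatsapp-runners", "2024-q4-buyers", "AB12CD34"))
```

Because each community gets its own utm_source value, downstream reports can group revenue and retention by the community that originated the share, even though the share itself was never observed.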

Communities Reduce Future CAC

That is the compounding mechanism most brands miss. A healthy community lowers future acquisition cost because trust, recommendation, and product education are distributed across the network rather than purchased from an ad exchange every time.

Phygital Trust Shrinks Consumer Hesitation

Physical experience still matters because uncertainty still kills conversion. Packaging, in-store interactions, events, and product touchpoints all shape whether a customer believes the digital promise. The job is not to romanticize physical retail. The job is to remove friction systematically.

Physical Goods Should Open Digital Proof Paths

Embed NFC tags or dynamic QR codes into the product journey when the category benefits from trust reinforcement. A tap can route to a digital passport, sourcing proof, one-click reorder flow, care instructions, or priority support. That turns a physical object into a recurring interface.

Visible Trust Systems Beat Abstract Brand Messaging

Consumers respond to proof they can verify. In electric mobility, range anxiety did not disappear because brands talked more persuasively. It eased when charging infrastructure became visible, reliable, and easy to access.

The same systems principle applies to retail and SaaS. If buyers hesitate because of delivery certainty, authenticity, onboarding complexity, service reach, or returns friction, build a visible trust layer that neutralizes the objection before the purchase decision stalls.

Friction Mapping Should Be an Operational Practice

List the top reasons a buyer delays purchase, then assign a system response to each one. Some objections need better information design. Others need a support workflow, local availability signal, reorder mechanism, or verifiable proof asset.
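
A friction map can start as a very small data structure: each objection, how often it comes up, and the system response assigned to it. The sketch below is one possible shape; the class, the sample objections, and the mention counts are hypothetical placeholders rather than real survey data.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Friction:
    objection: str
    mentions: int                      # how often buyers raise it
    system_response: Optional[str] = None


def prioritized(frictions: List[Friction], top_n: int = 3) -> List[Friction]:
    """Most frequently mentioned objections first."""
    return sorted(frictions, key=lambda f: f.mentions, reverse=True)[:top_n]


def unassigned(frictions: List[Friction]) -> List[str]:
    """Objections that still have no system response."""
    return [f.objection for f in frictions if not f.system_response]


# Placeholder entries for illustration.
friction_map = [
    Friction("Unsure about delivery date", 41, "local availability signal"),
    Friction("Worried about authenticity", 27, "verifiable proof asset"),
    Friction("Returns look complicated", 33),
    Friction("Setup seems hard", 12, "support workflow"),
]
print([f.objection for f in prioritized(friction_map)])
print(unassigned(friction_map))
```

Reviewing the unassigned list on a regular cadence is what turns friction mapping from a one-off workshop into an operational practice.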

That is what trust looks like when it is engineered.

Agentic Orchestration Creates Operational Elasticity

Headcount-heavy scaling breaks margins because execution cost grows linearly with every hire while demand stays variable. Operational elasticity is the ability to expand or contract execution capacity without rebuilding the org chart every quarter.

Basic Trigger Automation Fails Under Complexity

Simple single-step flows are useful, but they fragment under branching logic, exception handling, memory, and multi-system coordination. Use orchestration layers that support loops, state, tool calls, and conditional routing when the workflow has real operational consequences.

Platforms like n8n are useful because they let engineering teams build developer-controlled automation graphs instead of stacking disconnected no-code triggers that nobody wants to debug under load.
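
To show what loops, state, and conditional routing mean in the smallest possible form, here is a toy workflow runner in plain Python. It is not how n8n or any specific platform works internally. The step names, the simulated flaky reservation call, and the step budget are all assumptions made for the sketch.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Step = Callable[[State], str]  # each step mutates state and names the next step


def check_stock(state: State) -> str:
    # Conditional routing: branch on data carried in the shared state.
    state["in_stock"] = state["requested"] <= state["available"]
    return "reserve" if state["in_stock"] else "backorder"


def reserve(state: State) -> str:
    # Loop: a simulated flaky call that only succeeds on the second attempt.
    state["attempts"] = state.get("attempts", 0) + 1
    if state["attempts"] < 2:
        return "reserve"
    state["status"] = "reserved"
    return "done"


def backorder(state: State) -> str:
    state["status"] = "backordered"
    return "done"


STEPS: Dict[str, Step] = {
    "check_stock": check_stock,
    "reserve": reserve,
    "backorder": backorder,
}


def run(state: State, start: str = "check_stock", max_steps: int = 10) -> State:
    """Execute steps until one returns 'done' or the step budget runs out."""
    current, trace, taken = start, [], 0
    while current != "done":
        if taken >= max_steps:
            raise RuntimeError("step budget exceeded")
        trace.append(current)
        current = STEPS[current](state)
        taken += 1
    state["trace"] = trace
    return state


print(run({"requested": 2, "available": 5})["trace"])
```

The trace is the part that single-step trigger tools tend to lack: when a workflow misbehaves under load, you can see which branch was taken and how many retries happened.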

The Administrative Layer Should Be Abstracted

Wire AI agents into internal systems where the work is repetitive but context-sensitive:

- Ticket triage and prioritization

- Inventory and catalog queries

- Personalized lifecycle messaging

- Internal knowledge retrieval

- Workflow execution across support, ops, and CRM layers

The goal is not novelty. The goal is reducing latency between signal detection and action.
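
Ticket triage, the first item in the list above, is a useful place to start because the routing decision can be expressed, tested, and audited before any model is involved. The sketch below is a rule-based stand-in, not an AI agent. The urgent terms, thresholds, and queue names are assumptions, and in practice a classifier or LLM call might replace the scoring step while the routing contract stays the same.

```python
URGENT_TERMS = ("refund", "chargeback", "not delivered", "damaged")  # assumed list


def triage(ticket: dict) -> dict:
    """Score a support ticket and route it to a queue (illustrative rules only)."""
    text = ticket["text"].lower()
    score = 2 * sum(term in text for term in URGENT_TERMS)
    if ticket.get("customer_ltv", 0) >= 500:   # high-value customer
        score += 1
    if ticket.get("hours_open", 0) >= 24:      # already waiting a day
        score += 1
    if score >= 3:
        queue = "urgent"
    elif score >= 1:
        queue = "standard"
    else:
        queue = "low"
    return {"id": ticket["id"], "score": score, "queue": queue}


# Placeholder tickets for illustration.
tickets = [
    {"id": 1, "text": "Order arrived damaged and I want a refund", "hours_open": 2},
    {"id": 2, "text": "How do I change my email?", "customer_ltv": 900},
    {"id": 3, "text": "Where can I find the size chart?"},
]
for t in tickets:
    print(triage(t))
```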

Predictive Models Improve Inventory and Pricing Decisions

Feed historical sales, local demand patterns, seasonality, campaign effects, and community sentiment into forecasting models. That produces a practical advantage: leaner inventory, fewer stockouts, tighter pricing experiments, and fewer manual planning bottlenecks.
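
A production forecaster would use the richer inputs listed above, but the link between a forecast and an inventory decision can be shown with a deliberately simple baseline. The sketch below uses a trailing moving average as the forecast and the textbook reorder point (expected demand over the lead time plus safety stock). The sales figures, lead time, and safety stock are placeholder numbers.

```python
from statistics import mean
from typing import Sequence


def moving_average_forecast(weekly_sales: Sequence[float], window: int = 4) -> float:
    """Forecast next week's demand as the mean of the last `window` weeks."""
    if len(weekly_sales) < window:
        raise ValueError("not enough history for the chosen window")
    return mean(weekly_sales[-window:])


def reorder_point(forecast_per_week: float, lead_time_weeks: float,
                  safety_stock: float) -> float:
    """Stock level at which a replenishment order should be placed."""
    return forecast_per_week * lead_time_weeks + safety_stock


def needs_reorder(on_hand: float, forecast_per_week: float,
                  lead_time_weeks: float, safety_stock: float) -> bool:
    """True when on-hand stock has fallen to or below the reorder point."""
    return on_hand <= reorder_point(forecast_per_week, lead_time_weeks, safety_stock)


# Placeholder history: units sold per week for one SKU.
sales = [100, 120, 110, 130, 140, 150]
forecast = moving_average_forecast(sales)
print(forecast, reorder_point(forecast, lead_time_weeks=2, safety_stock=40))
```

Swapping the moving average for a seasonal or machine-learned model changes only the first function. The reorder logic that consumes the forecast stays the same, which is what makes the planning step automatable.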

The Closed-Loop Brand Architecture

The systems view is straightforward once the layers are visible:

- The machine-readable data plane makes the brand legible to answer engines and agents.

- Hyperlocal trust creates a defensible node where precise fulfillment and reputation compound.

- Community-led loops convert customers into retention and referral infrastructure.

- Phygital trust bridges physical proof into digital confidence.

- Agentic orchestration expands operational capacity without linear cost growth.

The pieces reinforce each other. Better entity definition improves search visibility. Better local trust improves conversion quality. Better communities improve LTV and referral velocity. Better automation preserves margin when demand arrives.

That is why the right mental model is not “marketing strategy.” It is systems architecture for brand growth.

Source Signals and Further Reading

The data points and directional arguments in this piece align with the following reports, research articles, and industry analyses:

- McKinsey: Winning in the Age of AI Search

- McKinsey QuantumBlack: Seizing the Agentic AI Advantage

- Bain: India Electric Vehicle Report 2023

- SIAM Industry Release

- U.S. SBA Research Spotlight: AI in Business

- ONDC Success Stories

- Allianz Risk Barometer 2026: AI

- Harvard Business School: IFC India Electric Mobility

- ScienceDirect Research Article

- ICCT: Charging Infrastructure Report

- MDPI Applied Sciences Article

- SparkToro: Search Everywhere Optimization

- TIME: ONDC and Small Retailers in India

- Forbes: What Brands Need to Know About AEO

- HubSpot: AEO Guide

- Google Business: Digital Marketing Trends 2026

- MarketingProfs: How Small Businesses Are Using AI

- Sprout Social: Social Media Metrics and Discovery Data

The unanswered question is not whether AI will reshape brand growth. It already has. The real question is which brands will keep treating distribution as a channel problem, and which ones will rebuild the stack so growth compounds at the system level.